When code becomes cheap, judgment becomes the bottleneck.

There is a new fantasy moving through software teams.

It is the fantasy of frictionless production.

A prompt goes in. A feature comes out. A backlog shrinks. A prototype appears before the coffee cools. The screen fills with convincing structure. Functions, tests, interfaces, handlers, adapters, migrations. The sensation is intoxicating. It feels like leverage. It feels like multiplication. It feels, above all, like progress.

And in one narrow sense, it is.

AI-assisted development really does compress the distance between intention and artifact. It reduces typing. It accelerates scaffolding. It lowers the threshold for experimentation. It makes many forms of software production feel astonishingly light.

But that is only the first movement of the system.

The second movement is quieter.

Because software has never been made of code alone. It has always also been made of judgment. Of architecture. Of historical memory. Of the strange, embodied understanding that lives inside experienced engineers who know not only what a system does, but why it became this way, where it is fragile, and what will break if one innocent line is changed in the wrong place.

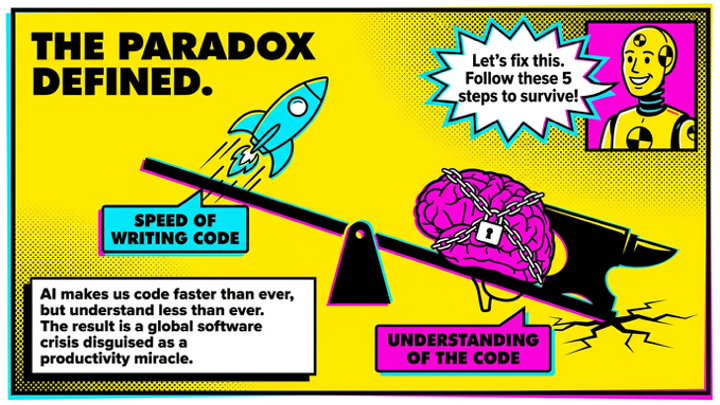

This is the paradox of AI-assisted software development: the cheaper code becomes, the more expensive understanding becomes.

And once understanding becomes the scarce resource, the whole system begins to behave differently.

The first illusion: speed

The first trap is obvious and powerful. AI makes developers feel faster.

That feeling is not fake. It is real at the level of local experience. There is less blank-page resistance. More surface area can be produced in less time. More tickets can be advanced. More things can be shown.

But the system-level question is different.

Faster at what?

Faster at generating code is not the same as faster at building software. It may not even be faster at building reliable software. Because every increase in generated volume creates a corresponding demand somewhere else: review, verification, integration, testing, explanation, maintenance, security, compliance, debugging, refactoring.

What disappears from the typing phase often reappears in the judgment phase.

And judgment does not scale as easily as generation.

You can multiply code with a model. You cannot multiply deep system understanding at the same rate.

That is where the illusion begins.
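A toy stock-and-flow model makes the asymmetry visible. This is a minimal sketch, assuming generated changes arrive at a constant daily rate and review is a fixed daily ceiling. The function name and every number below are hypothetical illustrations, not measurements from any study.

```python
def simulate_review_backlog(generated_per_day, review_capacity_per_day, days):
    """Toy stock-and-flow model (hypothetical units: changes awaiting review).

    Each day, generated changes flow into a review backlog, and at most
    review_capacity_per_day of them flow out. Returns the backlog after each day.
    """
    backlog = 0.0
    history = []
    for _ in range(days):
        backlog += generated_per_day                      # inflow: newly generated changes
        reviewed = min(backlog, review_capacity_per_day)  # outflow: capped by finite review capacity
        backlog -= reviewed
        history.append(backlog)
    return history


# Hypothetical scenario: generation triples while review capacity stays fixed.
before = simulate_review_backlog(generated_per_day=10, review_capacity_per_day=12, days=5)
after = simulate_review_backlog(generated_per_day=30, review_capacity_per_day=12, days=5)
print(before)  # [0.0, 0.0, 0.0, 0.0, 0.0]
print(after)   # [18.0, 36.0, 54.0, 72.0, 90.0]
```

As long as generation stays below review capacity, the backlog drains every day. Once generation exceeds that capacity, the unreviewed stock grows without bound, and producing more code cannot shrink it. The model deliberately ignores one plausible escape valve, reviewing each change less carefully, which hides the queue by converting it into risk.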

The second illusion: demand will be satisfied

A second fantasy follows close behind the first. If AI makes code cheaper, surely demand will be satisfied. Teams will catch up. Backlogs will shrink. The machine will finally let us do all the things we never had time to do.

But cheaper production rarely ends demand. It usually unlocks more of it.

When the cost of generating software falls, the market does not become quiet. It becomes more ambitious. More features are requested. More workflows are digitized. More experiments are approved. More “nice to have” becomes “why not.”

The firehose does not relieve the system. It enlarges it.

This is why organizations can feel more productive and more overwhelmed at the same time.

They are not escaping the loop. They are deepening it, because every gain in output invites new demand.

The disappearing apprenticeship

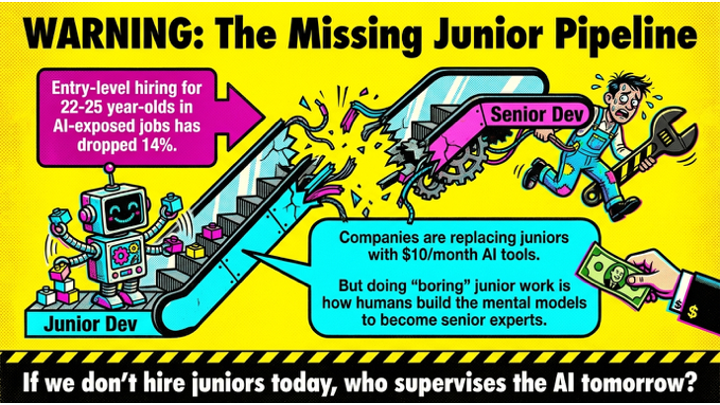

The most dangerous part of the paradox is not technical debt. It is developmental debt.

A profession survives by renewing itself.

Senior engineers do not arrive fully formed. They are not produced by credentials, nor by dashboards, nor by supervising generated output from a distance. They are formed through years of awkward contact with reality. Through boring tasks. Through broken builds. Through tracing ugly bugs. Through learning why a system resists neat abstractions. Through writing code that later embarrasses them. Through slowly earning the right to see more of the whole.

That learning path matters.

And yet it is exactly this layer that many organizations now treat as expendable. If AI can handle the boilerplate, why hire juniors? If the model can scaffold the routine pieces, why maintain the apprenticeship layer?

Because the apprenticeship layer was never only about output. It was about formation.

If you eliminate the junior pipeline in the name of efficiency, you do not merely reduce cost. You interrupt the replenishment of future judgment.

You keep the appearance of capability while silently dismantling its source.

The system may continue to move for a while. The current seniors are still present. The code still ships. The dashboards still glow. But the metabolism of mastery has already been damaged.

The craft is alive in form and degrading in substance.

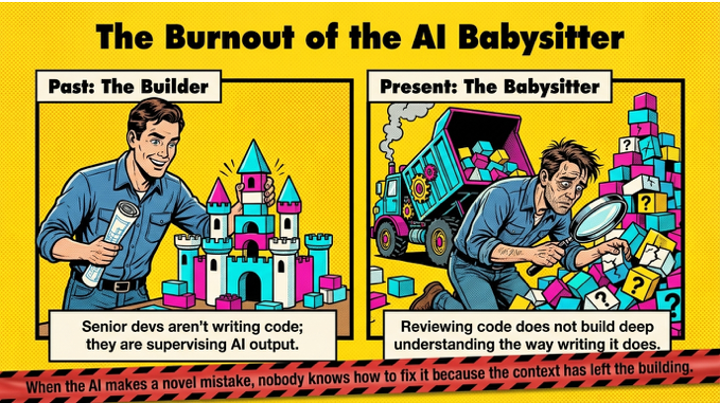

The rise of the babysitter

Something else changes too.

For many experienced engineers, the work becomes less like engineering and more like supervision. Less design, more filtration. Less creation, more babysitting.

This is not a sentimental complaint. It is a structural one.

The more “almost-right” output a system produces, the more cognitive energy must be spent validating it. Not just checking syntax. Interpreting intention. Hunting hidden assumptions. Verifying dependencies. Asking whether the generated answer fits the strange local logic of a specific organization, a specific domain, a specific historical architecture.

That work is tiring in a particular way.

It is tiring because it consumes expertise without renewing it.

To review weak output all day is not the same as to deepen one’s craft. It is possible to be highly occupied and slowly de-skilled at the same time. It is possible to become the guardian of a system one no longer feels allowed to shape.

And when that happens, the best people do not always wait for the collapse. Sometimes they leave early.

Not because they hate AI.

Because they can feel what kind of engineer the system is turning them into.

Why quality gatekeeping really matters

Every accelerating system needs a counterforce.

In AI-assisted software development, that counterforce is not optimism. It is not policy theatre. It is not another productivity dashboard.

It is quality gatekeeping.

Testing. Review. Security controls. Architecture scrutiny. Formal checks. Rollback discipline. Code ownership. Release friction where friction is deserved.

This is unpopular because it sounds regressive. In a culture intoxicated by acceleration, gatekeeping is easily framed as resistance. But in a system like this, gatekeeping is not bureaucracy. It is feedback.

It is the place where reality pushes back against fantasy.

Without it, technical debt remains invisible until it is catastrophic. With it, hidden deterioration becomes legible earlier. The organization is forced to confront a truth it would otherwise defer: that code volume and software quality are not the same variable.

Quality gatekeeping is not the final solution. It cannot restore the junior pipeline by itself. It cannot magically regenerate institutional memory. It cannot substitute for a healthier philosophy of engineering.

But it is the first serious intervention because it is the point where the system can still be slowed before it is broken.

It is where output is made answerable to consequence.

The real shift

The deeper lesson is not that AI should be rejected.

It is that software development must be re-understood.

We are moving into a world where generation is abundant and judgment is scarce. In such a world, the core capability of a software organization is no longer merely the ability to produce code. It is the ability to govern production without destroying the conditions of future understanding.

That means protecting apprenticeship.

It means preserving deep context.

It means refusing to confuse motion with capability.

It means treating review capacity as finite.

It means designing organizations around resilience, not only throughput.

Above all, it means remembering that software is not a pile of artifacts. It is a living relationship between people, systems, memory, and meaning.

When that relationship is weakened, code can still appear for a while.

And that is the paradox: we climb higher on the ladder of production, while the ground of judgment beneath us slowly gives way.

The more AI helps us produce software, the more carefully we must protect the human conditions that make software worth trusting.

Over the past months, I have spent an uncomfortable number of hours with AI-assisted software development. Not only prompting and generating, but watching, revising, comparing workflows, and trying to understand what actually changes when code becomes easy to produce. I followed how experienced developers structure their practice, studied the emerging rituals around AI-assisted work, and eventually stepped back to model the whole thing as a system.

What emerged was not a story about productivity alone, but a deeper paradox: the easier code becomes to generate, the more fragile software development can become as a human capability.

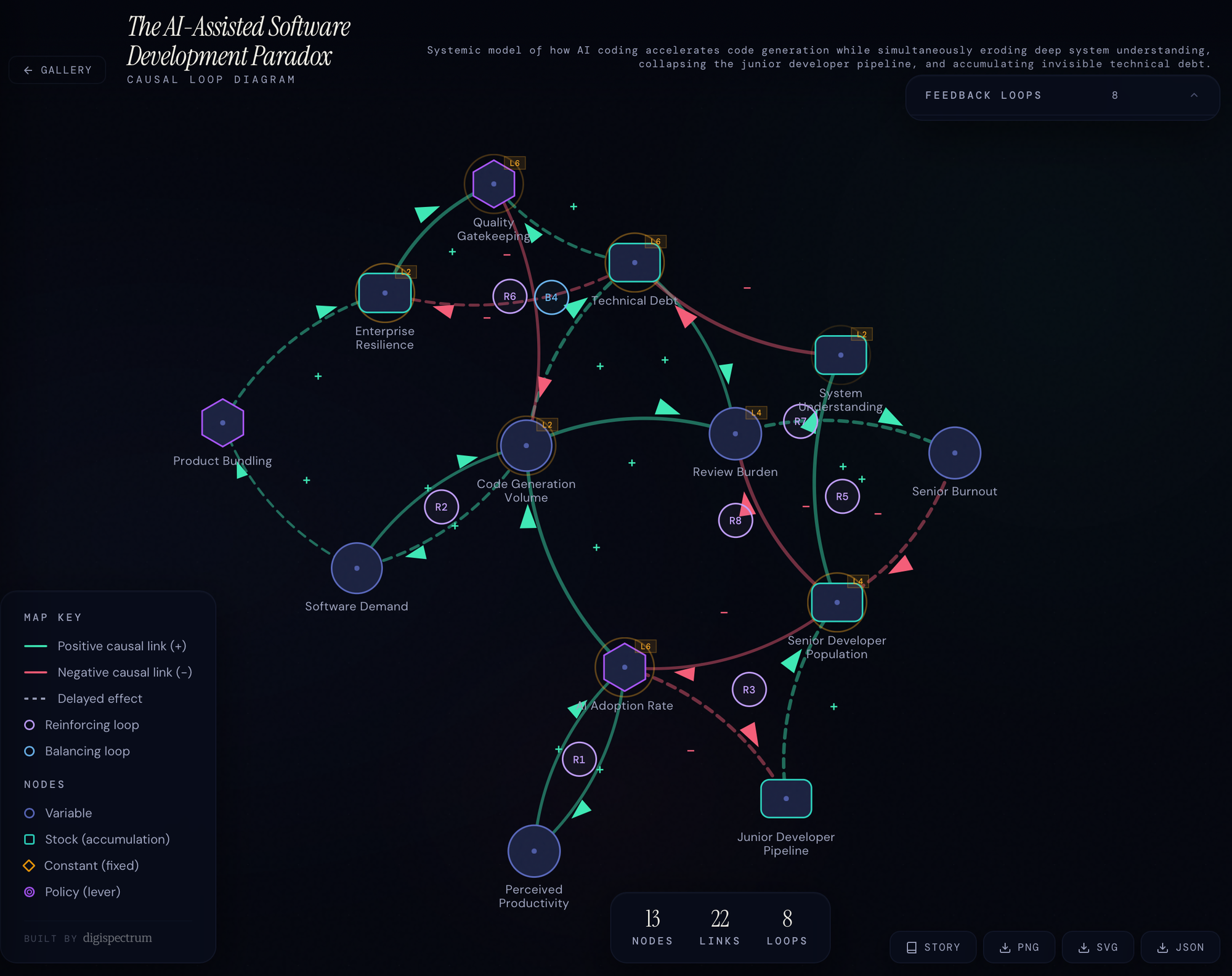

Systems thinking helped me see that more clearly. To understand the dynamics, I built an interactive causal loop diagram application: a way of tracing reinforcing loops, delays, the lone balancing force of quality gatekeeping, and the deeper tensions beneath the surface of AI-assisted development. It made visible what the productivity narrative often hides, and it also shed light on the leverage points.
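The diagram itself is not reproduced here, but the rule such diagrams rest on is simple enough to sketch. In standard causal loop notation, each link is marked + or -, and a loop is reinforcing when it contains an even number of negative links and balancing when that number is odd. The minimal sketch below applies that rule; the three loops are hypothetical readings of the argument above, and their variable names are illustrative rather than taken from the actual model.

```python
from math import prod


def loop_polarity(links):
    """Classify a closed causal loop given as (cause, effect, sign) links.

    sign is +1 (the effect moves in the same direction as the cause) or -1
    (opposite direction). A loop whose link signs multiply to a positive
    number, i.e. one with an even count of negative links, is reinforcing;
    otherwise it is balancing.
    """
    if not links:
        raise ValueError("a loop needs at least one link")
    # Each link's effect must be the next link's cause, wrapping around to the start.
    for (_, effect, _), (cause, _, _) in zip(links, links[1:] + links[:1]):
        if effect != cause:
            raise ValueError(f"loop is not closed: {effect!r} != {cause!r}")
    signs = [sign for _, _, sign in links]
    if any(s not in (1, -1) for s in signs):
        raise ValueError("each link sign must be +1 or -1")
    return "reinforcing" if prod(signs) > 0 else "balancing"


# Hypothetical loops loosely based on the essay's argument (names are illustrative).
demand_loop = [
    ("code output", "feature demand", +1),
    ("feature demand", "code output", +1),
]
apprenticeship_loop = [
    ("reliance on generated code", "junior hiring", -1),
    ("junior hiring", "future senior judgment", +1),
    ("future senior judgment", "reliance on generated code", -1),
]
gatekeeping_loop = [
    ("unreviewed output", "defects surfaced", +1),
    ("defects surfaced", "release friction", +1),
    ("release friction", "unreviewed output", -1),
]

for name, loop in [("demand", demand_loop), ("apprenticeship", apprenticeship_loop),
                   ("gatekeeping", gatekeeping_loop)]:
    print(name, loop_polarity(loop))
# demand reinforcing
# apprenticeship reinforcing
# gatekeeping balancing
```

Only the gatekeeping loop contains an odd number of negative links, which is why, in this toy reading, it is the one force pushing back against the two spirals.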

If this article resonates with you, or if you would like to see and discuss the underlying causal system, feel free to comment or contact me. I would be glad to continue the conversation.
